What is H.264?

H.264, also known as Advanced Video Coding (AVC), is a video compression standard that is widely used for the efficient recording, compression, and distribution of video content. It is known for its high compression ratio which allows for high-quality video to be delivered at lower bitrates. This makes it ideal for streaming video over the internet, as well as storing high-definition video in formats like Blu-ray discs.

Let's look at an analogy to understand H.264 better: Think of H.264 like a highly skilled packer who is preparing a suitcase for a long trip. Just as this expert packer can fold clothes in a way that maximizes space and minimizes wrinkles, H.264 compresses video files effectively. It ensures that the video retains its quality (like the clothes staying neat) while reducing the file size significantly. This smaller "suitcase" or file is then easier and quicker to send over the internet, just as a well-packed suitcase is easier to carry and transport.

How Does H.264 Codec Work?

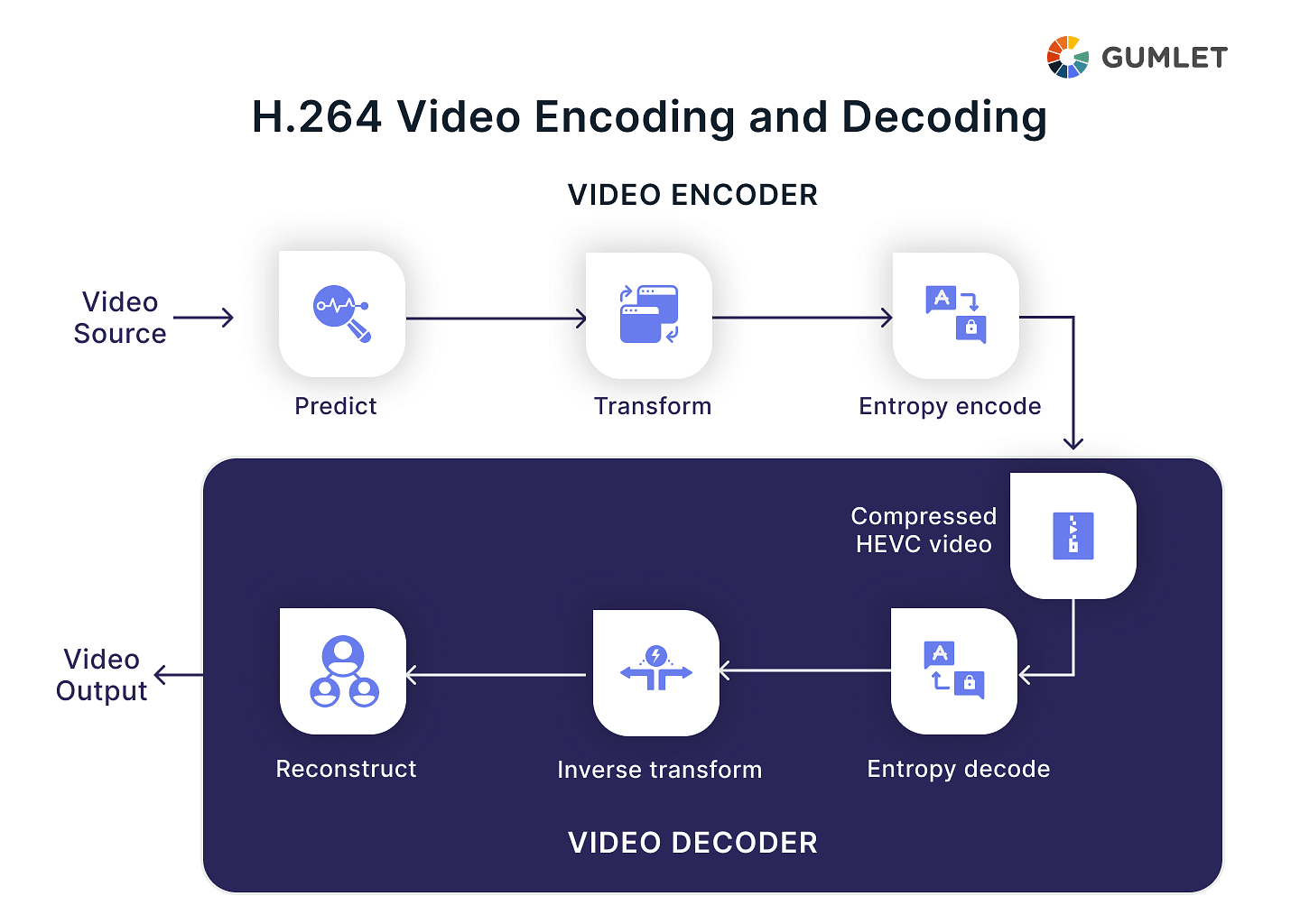

The H.264 video encoder carries out three main processes - prediction, transformation (together with quantization), and entropy encoding - to produce a compressed H.264 bitstream. The decoder then carries out the complementary processes - entropy decoding, inverse transformation, and reconstruction - to produce the decoded video stream. Let’s look at the H.264 encoder processes in detail:

- Prediction: The encoder predicts each block of the current frame, either from previously coded frames (inter prediction) or from neighboring blocks already coded in the same frame (intra prediction). This is based on the idea that consecutive frames often contain similar information, like a static background with moving objects in front. Instead of storing the entire current frame, the encoder only encodes the difference between the predicted frame and the actual frame, plus the information needed to repeat the prediction (such as motion vectors).

- Transformation: The remaining difference (prediction error) is converted into frequency coefficients using an integer transform that approximates the discrete cosine transform (DCT). This concentrates most of the information into a few coefficients, which allows for more efficient storage. Imagine dividing an image into tiny squares and describing how the values vary within each square rather than listing every pixel.

- Quantization: To further reduce file size, less significant data is discarded. This is a balancing act between achieving a smaller file size and maintaining acceptable video quality. Think of it like rounding off small numbers – it reduces precision but saves space.

- Entropy Coding: The remaining data is then losslessly encoded with variable-length or arithmetic codes (CAVLC or CABAC in H.264), so that frequently occurring values take fewer bits. This is similar in spirit to the Huffman coding used in ZIP files.
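To make the entropy coding step more concrete, here is a minimal sketch of unsigned Exp-Golomb coding, a simple variable-length code that H.264 uses for many header and syntax values (residual data goes through CAVLC or CABAC, which are considerably more involved and not shown). The function names are illustrative, not part of any library.

```python
def exp_golomb_encode(code_num: int) -> str:
    """Return the unsigned Exp-Golomb codeword for a non-negative integer, as a bit string."""
    if code_num < 0:
        raise ValueError("code_num must be non-negative")
    binary = bin(code_num + 1)[2:]       # binary form of code_num + 1
    prefix = "0" * (len(binary) - 1)     # leading zeros tell the decoder how long the codeword is
    return prefix + binary


def exp_golomb_decode(bits: str) -> list:
    """Decode a concatenation of valid unsigned Exp-Golomb codewords."""
    values = []
    pos = 0
    while pos < len(bits):
        zeros = 0
        while bits[pos] == "0":          # count the leading zeros
            zeros += 1
            pos += 1
        codeword = bits[pos:pos + zeros + 1]   # the next zeros + 1 bits hold code_num + 1
        values.append(int(codeword, 2) - 1)
        pos += zeros + 1
    return values


# Small values, which are the most common after prediction, get the shortest codewords.
symbols = [0, 0, 1, 0, 3, 0, 0, 2]
bitstream = "".join(exp_golomb_encode(s) for s in symbols)
print(bitstream)
print(exp_golomb_decode(bitstream) == symbols)
```

The code is prefix-free, so the decoder can split the bitstream back into values without any separators.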

On the other side, the decoder reverses the encoder's steps in the following way:

- Entropy Decoding: The compressed bitstream is decoded back into the quantized data.

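- Inverse Quantization and Transformation: The quantized coefficients are rescaled and transformed back into the spatial domain, giving an approximation of the prediction error.

- Reconstruction: The predicted frame is combined with the decoded prediction error to recreate a close approximation of the original frame. Because quantization discards data, the result is not bit-identical to the source.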
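The round trip described above can be sketched on a single 4x4 block. This is a simplified illustration, not how a real encoder is written: it uses the 4x4 integer core transform matrix from H.264, but handles scaling with floating-point math and a hypothetical `qstep` parameter, whereas real codecs fold the scaling into integer quantization tables driven by a quantization parameter (QP). Entropy coding is left out.

```python
# Forward 4x4 integer core transform matrix used by H.264.
CF = [
    [1,  1,  1,  1],
    [2,  1, -1, -2],
    [1, -1, -1,  1],
    [1, -2,  2, -1],
]
ROW_NORM_SQ = [4, 10, 4, 10]  # squared length of each row of CF, used for normalisation


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def transpose(m):
    return [list(row) for row in zip(*m)]


def encode_block(actual, predicted, qstep):
    """Prediction error -> transform -> quantization. Returns integer levels."""
    residual = [[actual[i][j] - predicted[i][j] for j in range(4)] for i in range(4)]
    coeffs = matmul(matmul(CF, residual), transpose(CF))
    return [
        [round(coeffs[i][j] / (ROW_NORM_SQ[i] * ROW_NORM_SQ[j]) ** 0.5 / qstep) for j in range(4)]
        for i in range(4)
    ]


def decode_block(levels, predicted, qstep):
    """Rescale -> inverse transform -> add the prediction back."""
    scaled = [
        [levels[i][j] * qstep / (ROW_NORM_SQ[i] * ROW_NORM_SQ[j]) ** 0.5 for j in range(4)]
        for i in range(4)
    ]
    residual = matmul(matmul(transpose(CF), scaled), CF)
    return [[round(predicted[i][j] + residual[i][j]) for j in range(4)] for i in range(4)]


# Hypothetical sample data: the prediction (e.g. the same block in the previous frame)
# and the actual block, which differs only slightly.
predicted = [[100, 102, 104, 106] for _ in range(4)]
actual = [
    [101, 103, 104, 107],
    [100, 102, 105, 106],
    [99, 102, 104, 108],
    [100, 101, 104, 106],
]

for qstep in (0.05, 1, 8):
    levels = encode_block(actual, predicted, qstep)
    print(qstep, levels, decode_block(levels, predicted, qstep))
```

A larger `qstep` rounds more coefficients to zero, which leaves less for the entropy coder to store, and typically increases the difference between the reconstructed block and the original.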

H.264 / AVC Overview

The H.264 codec was jointly developed by the ISO/IEC Moving Picture Experts Group (MPEG) and the Video Coding Experts Group (VCEG) of the ITU (International Telecommunication Union). It was first published in 2003 as ITU-T Recommendation H.264, “Advanced video coding for generic audiovisual services”, and is also standardized as MPEG-4 Part 10. Here are its key features and other essentials.

Features

H.264 typically needs about half the bitrate of older standards such as MPEG-2 to deliver comparable visual quality. The compression is lossy, so some detail is discarded, but at sensible bitrates the loss is hard to notice. Here are some of the features that make this possible and that keep the stream robust during transmission:

- Slice structure coding: A slice is a group of macroblocks within a picture (within one slice group when FMO is used) that can be decoded independently of the picture's other slices. Slices provide distinct resynchronization points within the video data, and no intra-frame prediction takes place across slice boundaries. This limits how far the effects of packet loss, and the resulting visual degradation, can spread.

- Flexible Macroblock Ordering (FMO): This changes how macroblocks are assigned to slice groups, so that neighboring macroblocks can travel in different packets. If one packet is lost, the missing macroblocks can be concealed using their received neighbors, which improves error robustness during video transmission.

- Data partitioning: This is another key feature of H.264. It splits the coded data of a slice into separate partitions (headers and motion information, intra residual data, and inter residual data), each carried in its own network abstraction layer (NAL) unit, so that the most important data can be given stronger protection.

- Intra-coding: Intra-coded macroblocks and pictures do not depend on earlier frames, so inserting them stops errors caused by packet loss from propagating through motion compensation.

- Switching pictures: Switching pictures (SP and SI slices) allow predictive coding to continue even when the decoder's reference frames differ from the ones the encoder used, for example after switching between streams of different bitrates. This feature can be used for adaptive error-resilient purposes, especially in wireless environments.
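Several of the features above surface in the bitstream as different kinds of NAL units. As a rough illustration, the sketch below decodes the one-byte header that starts every H.264 NAL unit; the type table is limited to a few common values, and the function and dictionary names are illustrative rather than taken from any library.

```python
# A few common nal_unit_type values; the standard defines more.
NAL_UNIT_TYPES = {
    1: "coded slice (non-IDR picture)",
    2: "coded slice data partition A",
    3: "coded slice data partition B",
    4: "coded slice data partition C",
    5: "coded slice (IDR picture)",
    6: "supplemental enhancement information (SEI)",
    7: "sequence parameter set (SPS)",
    8: "picture parameter set (PPS)",
}


def parse_nal_header(first_byte: int) -> dict:
    """Split the first byte of a NAL unit into its three header fields."""
    if not 0 <= first_byte <= 0xFF:
        raise ValueError("expected a single byte value")
    nal_unit_type = first_byte & 0x1F            # lowest 5 bits
    return {
        "forbidden_zero_bit": first_byte >> 7,   # must be 0 in a valid stream
        "nal_ref_idc": (first_byte >> 5) & 0x3,  # 0 means no other picture references this unit
        "nal_unit_type": nal_unit_type,
        "description": NAL_UNIT_TYPES.get(nal_unit_type, "other / reserved"),
    }


# Header bytes that commonly appear at the start of NAL units.
for byte in (0x67, 0x68, 0x65, 0x41):
    print(hex(byte), parse_nal_header(byte))
```

Types 2 to 4 are the data partitions mentioned above; a stream that does not use data partitioning carries its slices as types 1 and 5.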

Let’s look at what profiles and levels are in the context of H.264 compression.

Profile and Levels in H.264

Profiles in H.264:

Profiles in H.264 are intended to optimize the codec for specific devices and applications, ensuring compatibility and efficiency. Here are some of the key profiles:

- Baseline Profile: Optimized for low-cost applications with limited computing resources, such as video conferencing and mobile video. It supports I-frames (intra-coded frames) and P-frames (predictive frames) but not B-frames (bi-predictive frames), which are more computationally intensive.

- Main Profile (MP): Used for standard-definition digital TV broadcasts and DVD storage, providing higher quality than the Baseline Profile. It adds support for B-frames, which improve compression efficiency.

- Extended Profile (XP): Designed for streaming video applications with additional features not found in the Main Profile, such as improved error recovery.

- High Profile (HiP): The most commonly used profile for high-definition digital TV broadcasts and Blu-ray discs. It adds an adaptive 8x8 transform, 8x8 intra-prediction, and custom quantization matrices, improving video quality at higher resolutions. Related profiles such as High 10 extend it to higher bit depths.

Levels in H.264:

Levels in H.264 ensure that encoded content meets specific requirements for resolution, bitrate, and frame rate, allowing consistent playback quality across devices with varying processing powers. Each level defines maximum values for several parameters:

- Maximum frame size (macroblocks per frame), which together with the macroblock rate below bounds the frame rate

- Maximum macroblock processing rate (macroblocks/sec)

- Maximum video bit rate

- Maximum buffer size

For example:

- Level 1.0: Supports up to 176x144 resolution at 15 fps (frames per second), suitable for low-end applications.

- Level 3.0: Supports up to 720x576 resolution at 25 fps (or 720x480 at 30 fps), typical for standard-definition video.

- Level 4.0: Supports up to 1920x1080 resolution at 30 fps, suitable for high-definition video like that used in HDTV.

- Level 5.1: Supports up to 4096x2304 resolution at about 26 fps, or 3840x2160 at about 30 fps, intended for ultra high-definition video such as 4K content. 4K at 60 fps requires Level 5.2.
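The frame rate a level allows at a given resolution follows from its macroblock limits. The sketch below works this out for the four levels listed, using the maximum macroblock rate and maximum frame size from the standard's level table. It is a simplification: it ignores the bitrate and buffer limits, and the function names are illustrative.

```python
import math

# level -> (max macroblocks per second, max macroblocks per frame)
LEVEL_LIMITS = {
    "1":   (1_485, 99),
    "3":   (40_500, 1_620),
    "4":   (245_760, 8_192),
    "5.1": (983_040, 36_864),
}


def macroblocks(width: int, height: int) -> int:
    """Number of 16x16 macroblocks needed to cover a frame."""
    return math.ceil(width / 16) * math.ceil(height / 16)


def max_frame_rate(level: str, width: int, height: int):
    """Highest frame rate the level's macroblock limits allow, or None if the frame is too large."""
    max_mb_per_sec, max_mb_per_frame = LEVEL_LIMITS[level]
    mbs = macroblocks(width, height)
    if mbs > max_mb_per_frame:
        return None
    return max_mb_per_sec / mbs


for level, (w, h) in [("1", (176, 144)), ("3", (720, 576)), ("4", (1920, 1080)), ("5.1", (3840, 2160))]:
    print(level, f"{w}x{h}", max_frame_rate(level, w, h))
```

Because the limit is expressed in macroblocks per second, a level can trade resolution for frame rate: a smaller frame leaves room for more frames per second.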

The combination of profiles and levels in H.264 provides a structured way to describe the capabilities of an encoder/decoder, ensuring compatibility and performance across a wide range of devices and applications. This structuring makes H.264 extremely versatile and capable of meeting the needs of both low and high-end video applications.
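In practice, the profile and level of a stream are often advertised together in a codec string such as `avc1.640028`, used in HTML5 video and streaming manifests. The sketch below decodes such a string under a few simplifying assumptions: it only names the four profiles discussed here, it does not interpret the constraint flags (which can refine a profile, for example into Constrained Baseline), and it ignores the special Level 1b case. The function name is illustrative.

```python
# profile_idc values for the profiles discussed above
PROFILE_NAMES = {66: "Baseline", 77: "Main", 88: "Extended", 100: "High"}


def parse_avc_codec_string(codec: str) -> dict:
    """Decode 'avc1.PPCCLL': profile_idc, constraint flags, and level_idc as hex byte pairs."""
    prefix, _, hex_part = codec.partition(".")
    if prefix not in ("avc1", "avc3") or len(hex_part) != 6:
        raise ValueError(f"not an H.264 codec string: {codec!r}")
    profile_idc = int(hex_part[0:2], 16)
    constraint_flags = int(hex_part[2:4], 16)
    level_idc = int(hex_part[4:6], 16)
    return {
        "profile_idc": profile_idc,
        "profile": PROFILE_NAMES.get(profile_idc, "other"),
        "constraint_flags": constraint_flags,   # not interpreted in this sketch
        "level": level_idc / 10,                # level_idc 40 means Level 4.0
    }


for codec in ("avc1.42E01E", "avc1.4D401F", "avc1.640028"):
    print(codec, parse_avc_codec_string(codec))
```

A player can compare the decoded profile and level against what its decoder supports before it starts downloading the stream.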

Applications of H.264

Here's a glimpse into how H.264 codec is used in our digital world:

- Online Video Streaming: H.264 is widely used by platforms like YouTube, Netflix, and Twitch for online video streaming, often alongside newer codecs, enabling high-quality, efficient compression of videos at various resolutions, including HD.

- Video Conferencing: In video conferencing, maintaining video quality while minimizing latency and bandwidth usage is crucial. H.264 is capable of real-time video compression, which makes it ideal for video conferencing applications like Skype, Zoom, and Google Meet.

- Surveillance Systems: H.264 is a crucial technology in surveillance systems, enabling longer recording times and high-quality video transmission over limited bandwidth, making it ideal for continuous monitoring and recording systems.

- Video Recording and Editing: H.264 is commonly used in cameras and smartphones for video recording due to its balance between compression efficiency and quality retention, making it easy to store, manage, and edit on various software and devices.

- Blu-ray Discs: H.264 is one of the codecs approved for Blu-ray discs. Its efficient compression of high-definition video means more content fits on a single disc.

- Drone and Action Cameras: H.264 is a popular video compression format used in drones and action cameras due to its high compression ratio and quality output.

Is H.264 codec better than other video codecs?

H.264 was designed to provide good video quality at bit rates much lower than those of earlier standards such as MPEG-2, while keeping the design practical to implement and the bitstream robust. This also makes H.264 a flexible format, as it can be applied to a wide range of use cases. Newer codecs compress more efficiently, so whether H.264 is "better" depends on what you need.

Comparison with other Video Codecs

There are various other compression standards available, but H.264 is most commonly compared with H.265, MPEG-2, VP9, and AV1. Let’s briefly go over the newer codecs and how to choose the best one for your use case.

- H.265 (HEVC, High Efficiency Video Coding): This is the successor of AVC and delivers roughly 20-40% better compression efficiency at the same video quality. Like AVC, it supports resolutions up to 8K Ultra High Definition but produces comparatively smaller files, which makes it more efficient for streaming and storage. HEVC adds larger, more flexible coding blocks, parallel processing tools, and other important extensions.

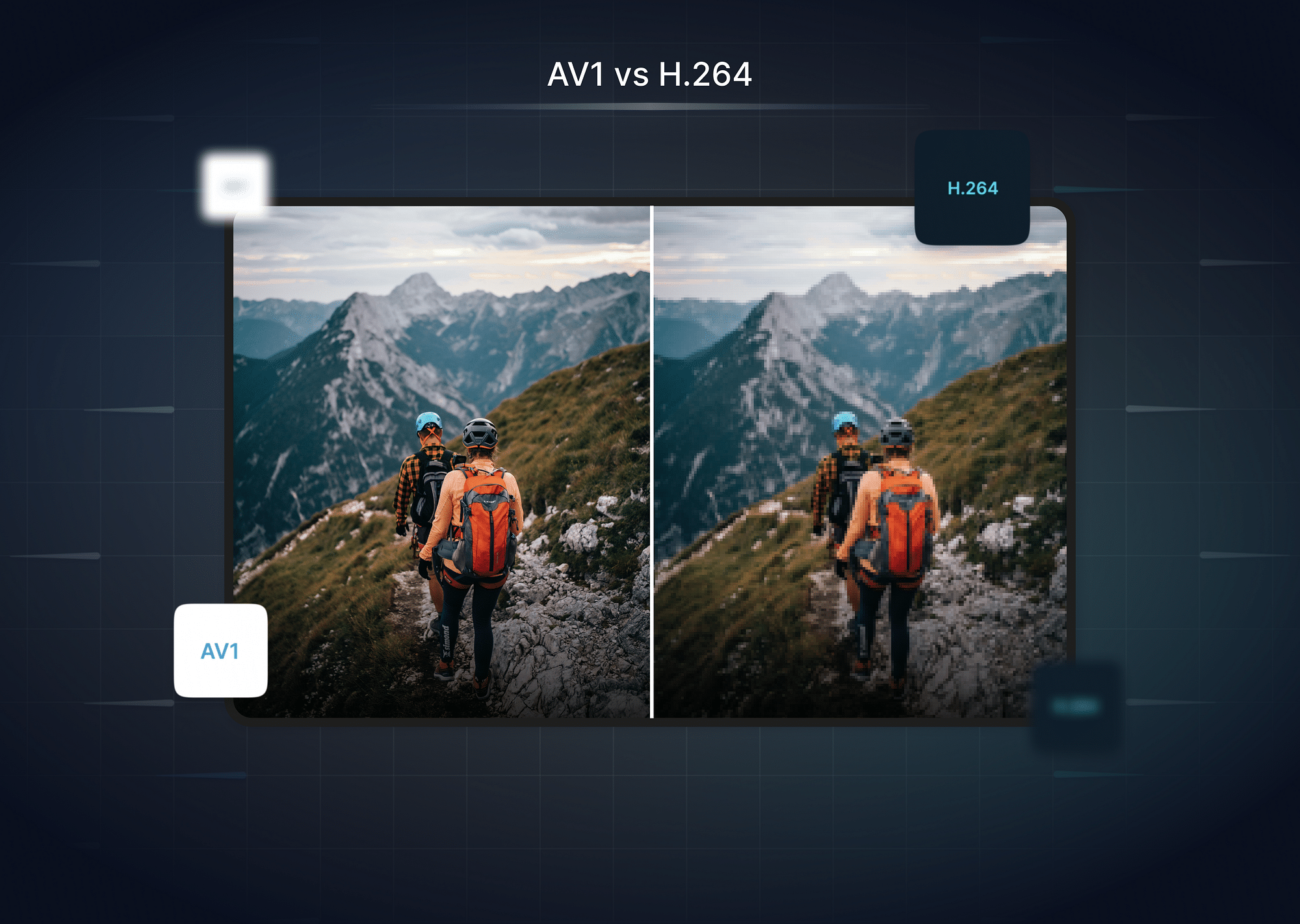

- AV1: Developed by AOMedia (the Alliance for Open Media), AV1 is a royalty-free, next-generation video coding format. It aims for roughly 30% better compression than HEVC and VP9, at the cost of more computationally demanding encoding, although hardware support is growing. This codec uses much more advanced algorithms and is intended for use in WebRTC and HTML5 web video, together with the Opus audio codec.

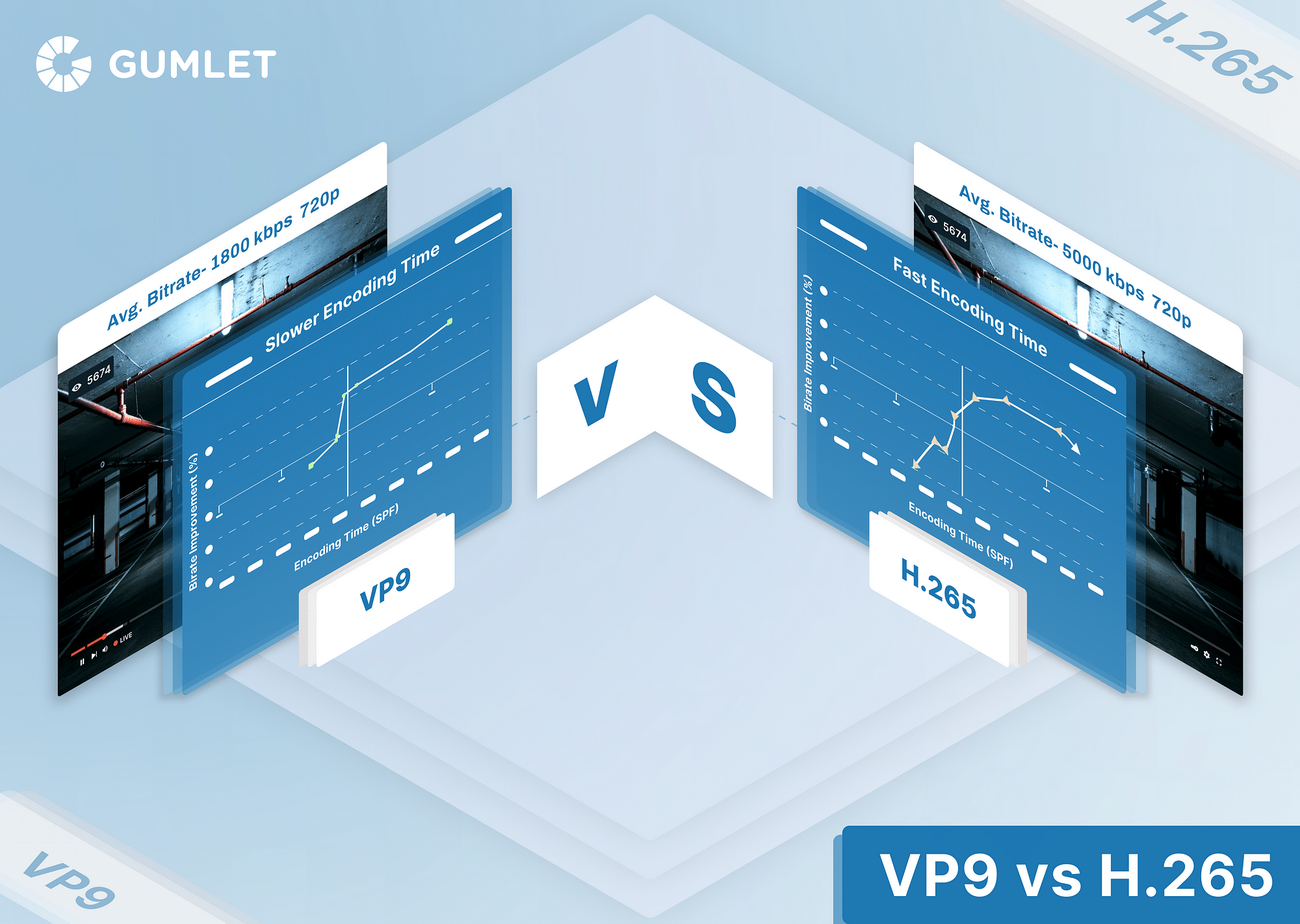

- VP9: This is a royalty-free alternative to H.265 and was developed by Google. Google's own platforms, from the Chrome browser and Android phones to YouTube, support the VP9 codec. It offers better video quality than H.264 at the same bitrate, which makes it effective for streaming and transmitting 4K videos online.

In terms of how H.264 compares with H.265, AV1, and VP9, you should keep in mind that H.264 is an older codec. With rapid advancements in technology, codecs have evolved over the years to tackle much more complicated and specific challenges. However, there are still ample use cases for each of the codecs - both old and new - depending on the device and bandwidth being used.
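To put the efficiency figures into perspective, here is a back-of-the-envelope calculation of what a 20-40% bitrate saving means for file size. The 5 Mbps bitrate and 10-minute duration are made-up example values, and real savings depend heavily on the content and the encoder settings.

```python
def file_size_mb(bitrate_mbps: float, duration_s: float) -> float:
    """Size in megabytes (10^6 bytes) of a stream at a constant average bitrate."""
    return bitrate_mbps * duration_s / 8   # 8 bits per byte


def size_with_savings(h264_bitrate_mbps: float, duration_s: float, savings: float) -> float:
    """Size of the same video if a newer codec needs `savings` (0..1) less bitrate than H.264."""
    return file_size_mb(h264_bitrate_mbps * (1 - savings), duration_s)


duration = 10 * 60          # a hypothetical 10-minute video
h264_bitrate = 5.0          # hypothetical average H.264 bitrate in Mbps

print("H.264:", file_size_mb(h264_bitrate, duration), "MB")
for savings in (0.2, 0.4):
    print(f"{savings:.0%} saving:", round(size_with_savings(h264_bitrate, duration, savings), 1), "MB")
```

The same arithmetic applies to bandwidth: a stream that needs 20-40% fewer bits reaches viewers on slower connections at the same quality.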

Benefits of H.264

To summarize, the benefits of H.264 include:

- High Compression Efficiency: H.264 provides significant improvements in compression efficiency compared to previous standards. This means it can compress video files to smaller sizes while maintaining high quality, reducing the amount of bandwidth and storage required.

- Good Video Quality at Low Bit Rates: It is particularly efficient at low bit rates, which is crucial for streaming over limited bandwidth networks without compromising too much on video quality. This makes it ideal for mobile streaming and other applications where bandwidth may be constrained.

- Flexibility across Various Networks and Devices: H.264 is designed to be flexible and adaptable for use across a wide range of network environments and devices. It supports various network-friendly features that allow videos to be efficiently streamed even over fluctuating network conditions.

- Widespread Compatibility: H.264 is supported by most software and hardware, including browsers, smartphones, tablets, video conferencing systems, and more. This universal support ensures that videos encoded in H.264 can be easily played back on almost any device.

- Robustness to Errors: It includes several features that make it robust against errors and packet loss, which can be common in less reliable networks like mobile data. This robustness improves the viewing experience by minimizing the impact of such network issues.

- Support for High Definition (HD) and Ultra High Definition (UHD): At its highest levels, H.264 supports up to 8K resolution, allowing it to be used for HD, Full HD, and even UHD video. This has been essential for modern TV broadcasts and online video services that offer high-resolution video streaming.

- Scalability: Through its Scalable Video Coding (SVC) extension, H.264 supports temporal, spatial, and quality scalability. This allows a single stream to be adapted for different frame rates, display sizes, and resolutions, enhancing the efficiency of video content delivery.

Is H.264 the Recommended Codec for Video Streaming?

H.264 is still a highly recommended codec for video streaming in many cases, though there are newer options to consider. Here's why it stands out:

- Broad Device Compatibility: H.264 is the most widely used codec, playable on virtually any device, from laptops and smartphones to smart TVs and game consoles. This makes it a safe choice for ensuring a large audience can watch your streams without compatibility issues.

- Efficient Compression: H.264 offers good quality video at smaller file sizes compared to older codecs. This translates to smoother streaming experiences, especially for viewers with limited bandwidth.

- Mature Technology: With years of development and refinement, H.264 encoding and decoding are well-established processes. This means readily available tools and reliable performance.

However, there are some things to keep in mind:

- Newer Codecs: H.265 (HEVC) offers even better compression for higher resolution streams, but its device compatibility isn't as universal as H.264.

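- Encoding Complexity Trade-off: Newer codecs achieve their savings with more computationally demanding encoding. H.264 is lighter to encode, but it needs a higher bitrate than they do to reach the same quality.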

Here's a quick summary to help you decide:

- Choose H.264 if: Broad device compatibility is your top priority, you have viewers with limited bandwidth, or you need a well-supported and reliable codec.

- Consider newer codecs like H.265 if: You're streaming high-resolution content (4K or higher) and compatibility with older devices is less of a concern. You also have the processing power to handle more complex encoding.
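If you go with H.264, a common way to produce a widely compatible stream is FFmpeg with the libx264 encoder. The sketch below only builds the command line; the file names are placeholders, and the profile, level, CRF, and preset values are reasonable starting points rather than recommendations for every case. Running the command requires an FFmpeg build that includes libx264.

```python
import shlex


def h264_ffmpeg_args(src: str, dst: str, crf: int = 23, preset: str = "medium") -> list:
    """Build an FFmpeg command that encodes `src` to H.264 video with AAC audio."""
    return [
        "ffmpeg",
        "-i", src,
        "-c:v", "libx264",          # H.264 encoder
        "-profile:v", "high",       # High profile, as used for HD content
        "-level", "4.0",            # enough for 1080p at 30 fps
        "-pix_fmt", "yuv420p",      # 8-bit 4:2:0, the most widely supported pixel format
        "-preset", preset,          # slower presets compress better but take longer
        "-crf", str(crf),           # quality target: lower means higher quality and larger files
        "-c:a", "aac",
        "-movflags", "+faststart",  # put the MP4 index first so playback can start sooner
        dst,
    ]


args = h264_ffmpeg_args("input.mov", "output.mp4")   # placeholder file names
print(shlex.join(args))
# To actually run it: subprocess.run(args, check=True)
```

Lowering the profile to `main` or `baseline` trades some compression efficiency for compatibility with older devices.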

Conclusion

Codec selection is a challenge that every media producer faces, and the best way to handle it is to be well informed. If you understand the various compression formats and the use cases each one suits, you will make the right decision. H.264 is a widely used compression format that has redefined the way videos are shared and transmitted digitally.

FAQs

1. Is H.264 the same as MP4?

MP4 is a video container format, while H.264 is a video compression format (or codec) that requires a video container like MP4 to host the encoded video.

2. Is H.264 high quality?

Yes, H.264 is a high-quality video compression format widely used for various applications. It is known for providing high-quality video at relatively low bit rates.

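3. Is H.264 good for 4K?

H.264 can encode 4K video (at Level 5.1 and above) and is widely supported. However, newer codecs such as H.265 usually deliver the same quality at lower bitrates, so they are often preferred for 4K when device support allows.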

4. How to play H.264 files?

You can play H.264 files with a media player that supports the codec, such as VLC Media Player or 5KPlayer. If you want to keep using your existing media player and it doesn't natively support H.264, you can also install a codec pack. Choose one from a reputable source, such as the K-Lite Codec Pack.